In today’s post, I would like to show you how to configure Microsoft Failover Clustering across Virtual Machines with a shared disk, the so-called Cluster in a Box (CiB). A typical Microsoft Cluster / Failover Cluster deployment has cluster nodes with shared storage. Cluster in a Box is typically used when you have to provide availability at the Operating System level for an application or service and you can’t use Raw Device Mapping in your environment. Because both nodes run on the same ESXi host, it protects against guest OS and application failures, but not against a host failure.

Environment and configuration

VMware ESXi - version 5.5.0 build 1623387 (Update 1)

Operating System - Microsoft Windows Server 2012 R2 Standard

Cluster - Two Virtual Machines with a shared disk

Virtual Machines - each Virtual Machine has two network interfaces: LAN and Cluster.

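DRS rule - both Virtual Machines must run on the same host, because a disk on a controller with Virtual SCSI bus sharing can only be shared between Virtual Machines on one ESXi host. I created a Keep Virtual Machines Together rule.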
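The DRS rule enforces one simple invariant: every cluster node sits on the same ESXi host. The minimal sketch below checks that invariant against a VM-to-host placement map. The host names are hypothetical, and in a real environment you would read the current placement from vCenter, which this sketch does not do.

```python
# Minimal sketch: confirm that all Cluster in a Box nodes run on one ESXi host.
# The host names used below are hypothetical placeholders.


def nodes_share_host(placement: dict[str, str], nodes: list[str]) -> bool:
    """Return True if every node in `nodes` is placed on the same host.

    `placement` maps a VM name to the name of the ESXi host it runs on.
    A node missing from `placement` counts as a violation.
    """
    hosts = {placement.get(node) for node in nodes}
    return len(hosts) == 1 and None not in hosts


placement = {"WMCLU01": "esx01", "WMCLU02": "esx01"}  # hypothetical hosts
print(nodes_share_host(placement, ["WMCLU01", "WMCLU02"]))  # True
```

If this ever returns False for your nodes, the shared disk cannot work for both Virtual Machines, which is exactly what the Keep Virtual Machines Together rule prevents.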

Prerequisites configuration

I joined both Virtual Machines to the domain and used the following network configuration:

WMCLU01:

- LAN: 10.0.0.16

- Netmask: 255.255.0.0

- Gateway: 10.0.0.1

- Cluster: 192.168.1.1

- Netmask: 255.255.0.0

WMCLU02:

- LAN: 10.0.0.17

- Netmask: 255.255.0.0

- Gateway: 10.0.0.1

- Cluster: 192.168.1.2

- Netmask: 255.255.0.0
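Before building the cluster, it is worth sanity-checking an IP plan like the one above: no address should be used twice, each node's LAN and Cluster interfaces should sit in different subnets, all LAN interfaces should share one subnet (likewise for Cluster), and the gateway should be inside the LAN subnet. A minimal sketch using Python's standard `ipaddress` module; the set of checks is my own selection, not a complete validation.

```python
import ipaddress

# Addresses from the network configuration above (both networks use /16).
NODES = {
    "WMCLU01": {"lan": "10.0.0.16", "cluster": "192.168.1.1"},
    "WMCLU02": {"lan": "10.0.0.17", "cluster": "192.168.1.2"},
}
NETMASK = "255.255.0.0"
GATEWAY = "10.0.0.1"


def check_ip_plan(nodes, netmask, gateway):
    """Return a list of problems found in the IP plan (empty if none)."""
    problems = []
    lan_nets, cluster_nets, seen = set(), set(), set()
    gw = ipaddress.ip_address(gateway)
    for name, addrs in nodes.items():
        # ip_interface accepts a dotted netmask after the slash.
        lan = ipaddress.ip_interface(f"{addrs['lan']}/{netmask}")
        cluster = ipaddress.ip_interface(f"{addrs['cluster']}/{netmask}")
        for iface in (lan, cluster):
            if iface.ip in seen:
                problems.append(f"{name}: duplicate address {iface.ip}")
            seen.add(iface.ip)
        lan_nets.add(lan.network)
        cluster_nets.add(cluster.network)
        if lan.network == cluster.network:
            problems.append(f"{name}: LAN and Cluster share {lan.network}")
        if gw not in lan.network:
            problems.append(f"{name}: gateway {gw} is outside {lan.network}")
    if len(lan_nets) > 1:
        problems.append("LAN interfaces are in different subnets")
    if len(cluster_nets) > 1:
        problems.append("Cluster interfaces are in different subnets")
    return problems


print(check_ip_plan(NODES, NETMASK, GATEWAY))  # []
```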

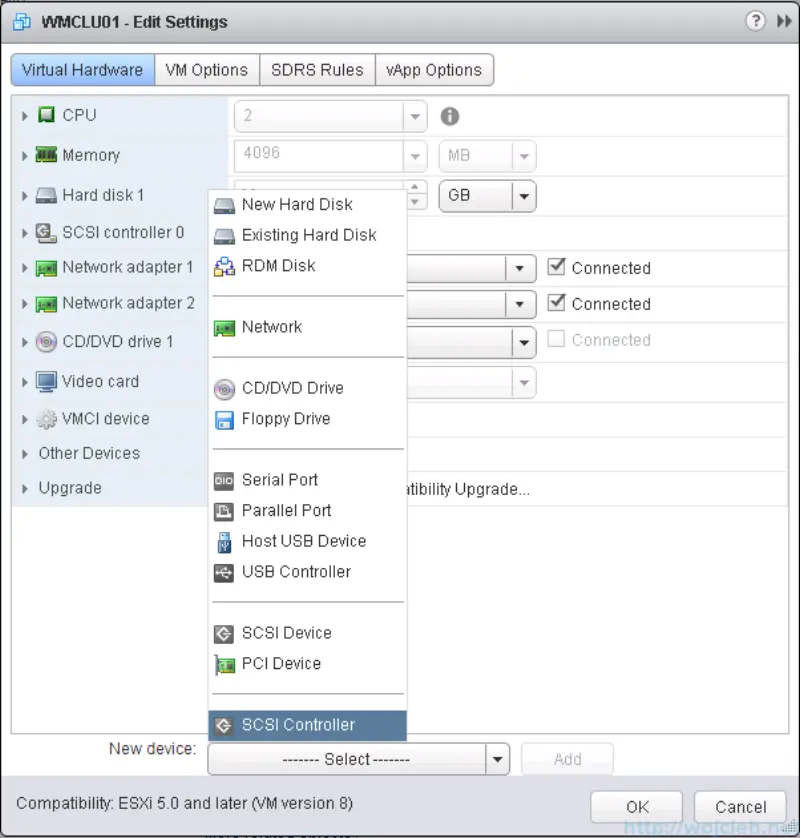

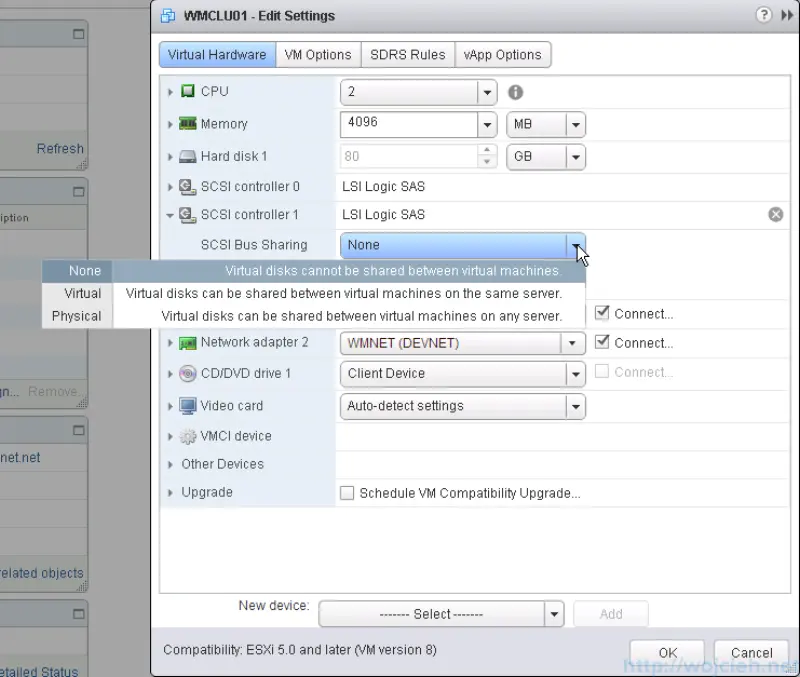

I added a new SCSI Controller (LSI Logic SAS) and changed SCSI Bus Sharing from **None** to **Virtual**.

This allows a disk attached to this controller to be shared with another Virtual Machine on the same host.
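As far as I know, the vSphere Client records this controller change in the Virtual Machine's `.vmx` file with keys like the ones rendered below (`lsisas1068` is the device type string for LSI Logic SAS). The controller number 1 is an assumption, since `scsi0` normally holds the system disk. This is only a sketch for comparing against your own `.vmx` after the change; make the change itself through the vSphere Client rather than by hand-editing the file.

```python
# Illustrative sketch: .vmx lines that, to my understanding, describe an
# LSI Logic SAS controller with SCSI Bus Sharing set to Virtual.
# Bus number 1 is an assumption (scsi0 usually holds the boot disk).

VALID_SHARING = ("none", "virtual", "physical")


def shared_controller_vmx(bus: int = 1, sharing: str = "virtual") -> list[str]:
    """Render .vmx lines for a shared LSI Logic SAS SCSI controller."""
    if sharing not in VALID_SHARING:
        raise ValueError(f"unsupported sharedBus value: {sharing}")
    return [
        f'scsi{bus}.present = "TRUE"',
        f'scsi{bus}.virtualDev = "lsisas1068"',   # LSI Logic SAS
        f'scsi{bus}.sharedBus = "{sharing}"',     # "virtual" for Cluster in a Box
    ]


for line in shared_controller_vmx():
    print(line)
```

For Cluster in a Box the sharing value stays `virtual`; `physical` is the mode used when cluster nodes run on different hosts.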

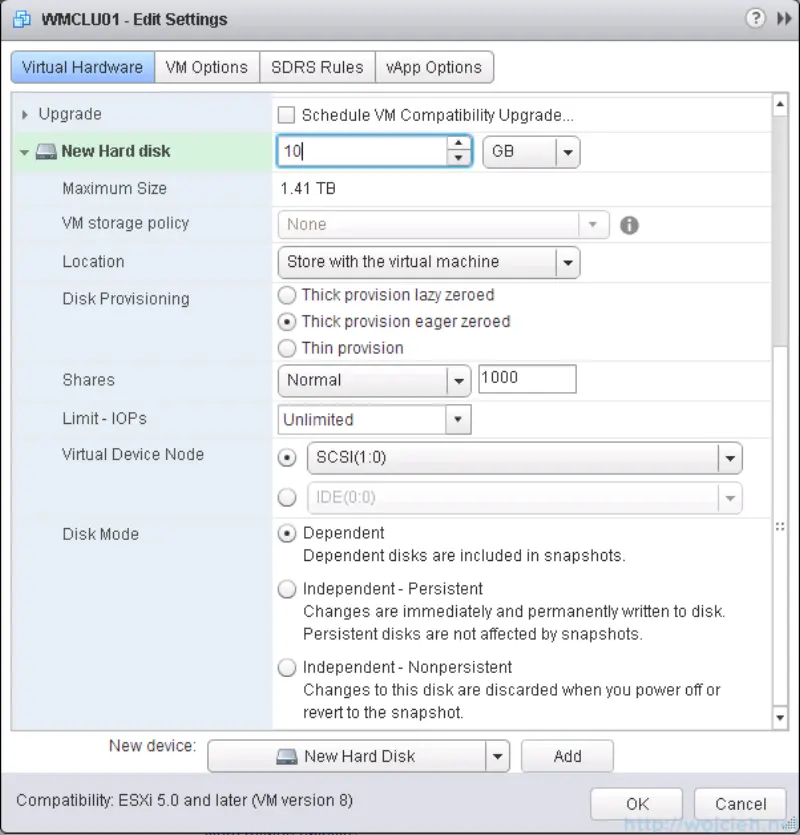

Now add a new disk on that controller; this is the disk that will be shared between the Virtual Machines. The disk type must be Thick Provision Eager Zeroed; otherwise, you will not be able to use Microsoft clustering.
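If you prefer to create the shared disk from the ESXi shell instead of the vSphere Client, `vmkfstools` can create an eager-zeroed thick disk with `-c <size>` and `-d eagerzeroedthick`. The minimal sketch below only builds that command line; the datastore path and size are hypothetical, and you would then attach the resulting `.vmdk` as an existing disk on the shared controller.

```python
import shlex


def eager_zeroed_disk_cmd(vmdk_path: str, size_gb: int) -> list[str]:
    """Build a vmkfstools command that creates an eager-zeroed thick disk.

    The path and size are inputs you choose; run the resulting command in
    the ESXi shell (not inside the guest).
    """
    if size_gb <= 0:
        raise ValueError("size_gb must be a positive number of gigabytes")
    return [
        "vmkfstools",
        "-c", f"{size_gb}G",          # create a virtual disk of this size
        "-d", "eagerzeroedthick",     # allocate and zero all blocks up front
        vmdk_path,
    ]


# Hypothetical datastore path and size for the shared quorum disk.
cmd = eager_zeroed_disk_cmd("/vmfs/volumes/datastore1/WMCLU01/WMCLU01_1.vmdk", 1)
print(shlex.join(cmd))
```

Eager zeroing takes longer at creation time than the other formats because every block is written once, which is why a large shared disk may take a while to appear.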

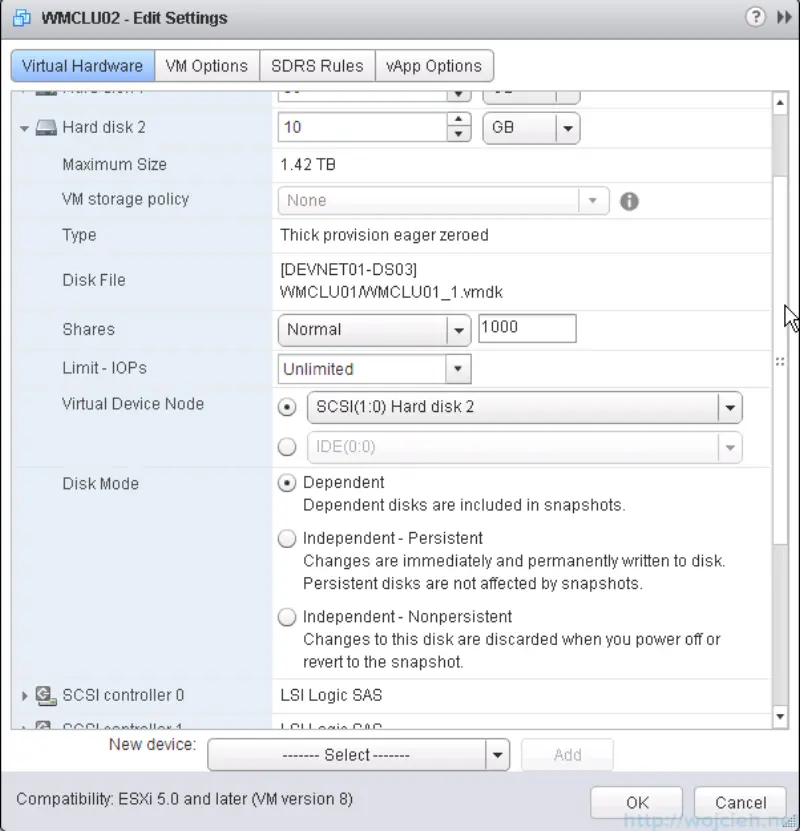

Repeat the controller step on the second cluster node, in my case WMCLU02: I added a new SCSI controller with SCSI Bus Sharing set to Virtual.

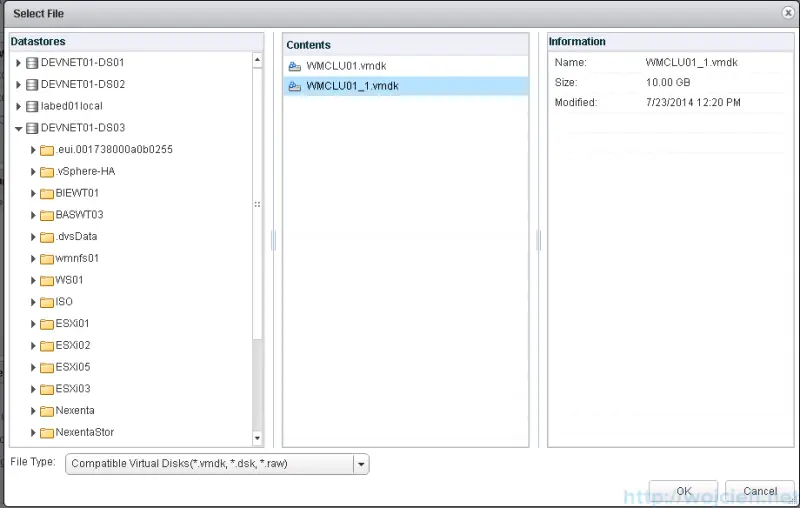

Now add the **existing disk** from the first cluster node to the second cluster node.

Assign it to the SCSI controller whose bus sharing type is Virtual.
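To double-check the result, you can compare the two Virtual Machines' `.vmx` files: both should attach the same `.vmdk` at the same virtual device node (for example SCSI (1:0), which is what VMware's setup guide suggests, as I understand it) on a controller whose `sharedBus` is `virtual`. The sketch below uses a simplified parser that only handles plain `key = "value"` lines; the file names and folders are hypothetical, and relative file names are resolved against each Virtual Machine's own folder.

```python
import posixpath


def parse_vmx(text: str) -> dict[str, str]:
    """Parse simple `key = "value"` lines from .vmx text."""
    entries = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        entries[key.strip()] = value.strip().strip('"')
    return entries


def shared_disk_ok(vmx_a: str, dir_a: str, vmx_b: str, dir_b: str,
                   node: str = "scsi1:0") -> bool:
    """Check that two VMs attach the same disk file at the same device node
    on a controller with virtual bus sharing."""
    bus = node.split(":")[0]  # e.g. "scsi1"
    paths = []
    for text, vm_dir in ((vmx_a, dir_a), (vmx_b, dir_b)):
        cfg = parse_vmx(text)
        if cfg.get(f"{bus}.sharedBus") != "virtual":
            return False
        file_name = cfg.get(f"{node}.fileName")
        if not file_name:
            return False
        # Relative names are resolved against the VM's own folder.
        paths.append(posixpath.normpath(posixpath.join(vm_dir, file_name)))
    return paths[0] == paths[1]


# Hypothetical .vmx excerpts and folders for the two cluster nodes.
node1 = '''
scsi1.virtualDev = "lsisas1068"
scsi1.sharedBus = "virtual"
scsi1:0.fileName = "WMCLU01_1.vmdk"
'''
node2 = '''
scsi1.virtualDev = "lsisas1068"
scsi1.sharedBus = "virtual"
scsi1:0.fileName = "/vmfs/volumes/datastore1/WMCLU01/WMCLU01_1.vmdk"
'''
print(shared_disk_ok(node1, "/vmfs/volumes/datastore1/WMCLU01",
                     node2, "/vmfs/volumes/datastore1/WMCLU02"))  # True
```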

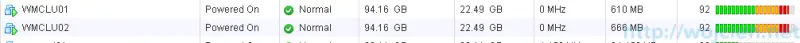

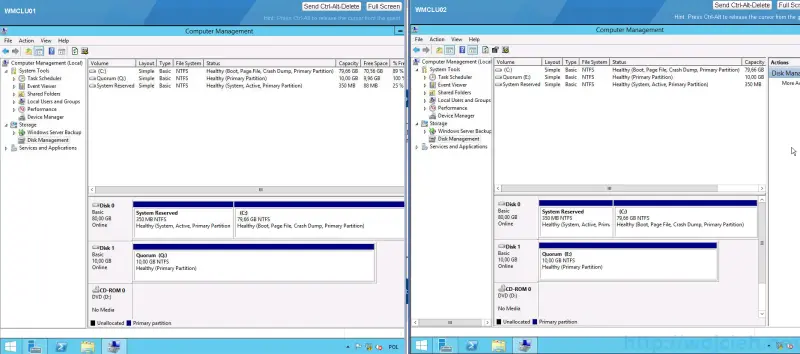

The first test of the configuration is to power on both VMs and check that the disk is visible on both cluster nodes.

After logging in to both cluster nodes, I created the Quorum drive, and it is visible on both sides.

Microsoft Clustering configuration

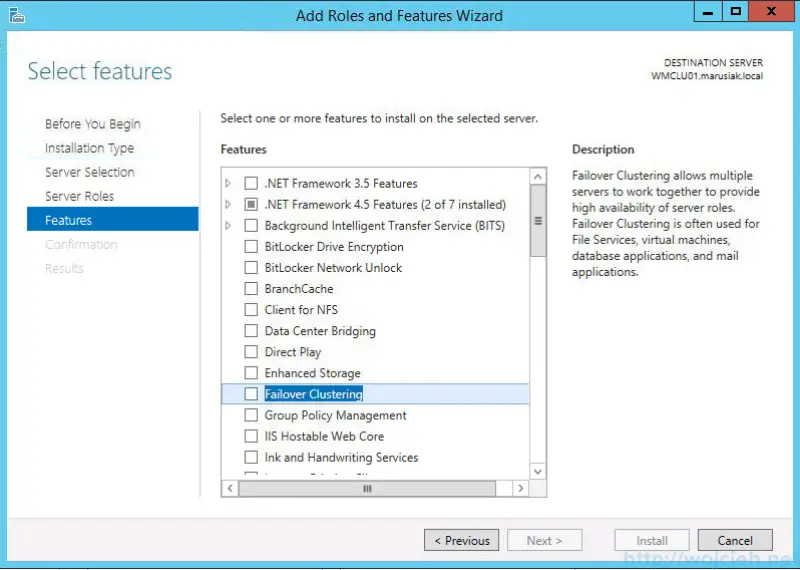

Start Server Manager on both nodes and, in **Features**, select **Failover Clustering** and accept the additional features it needs to install.

Click Install and wait for the wizard to finish.

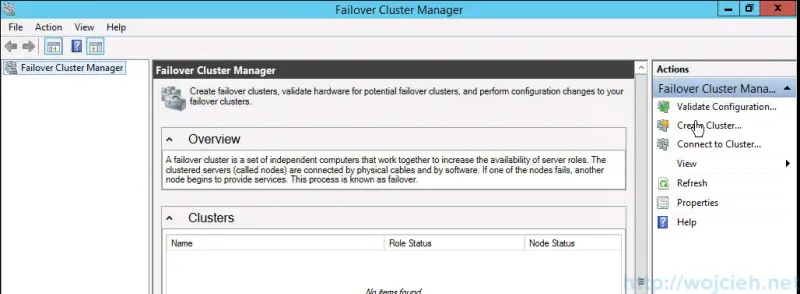

Start Failover Cluster Manager and click Create Cluster.

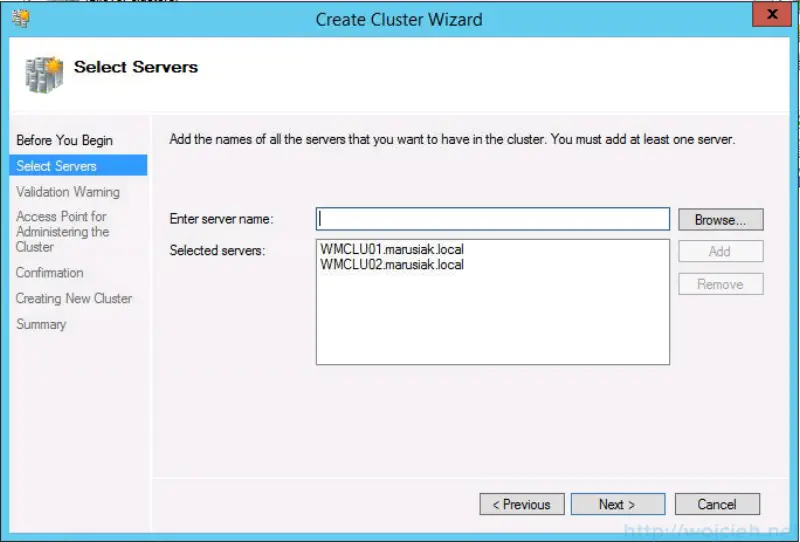

Add the cluster nodes and click Next.

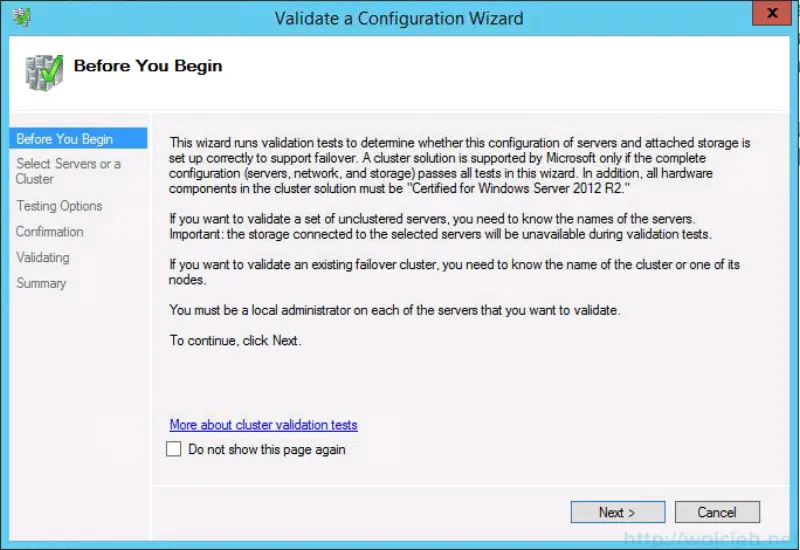

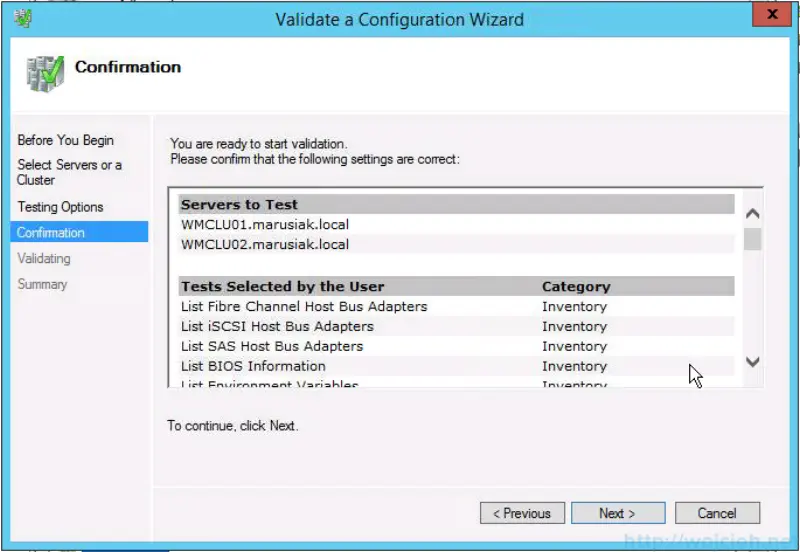

You will be asked to run the cluster validation wizard. Use it to check that all components are configured correctly.

I selected Run all tests (recommended).

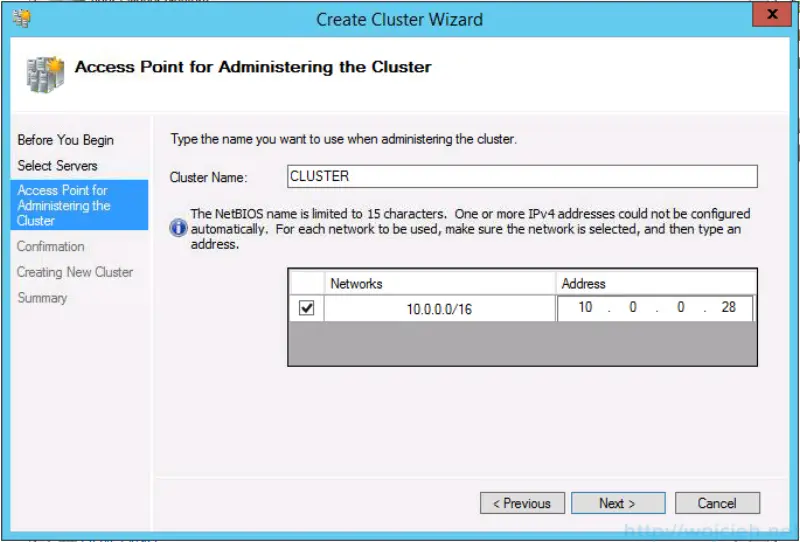

Enter the cluster name and provide the cluster IP address.

Wait for the Create Cluster wizard to finish.
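The wizard steps so far (installing the feature, validating, creating the cluster) also have PowerShell equivalents: `Install-WindowsFeature`, `Test-Cluster` and `New-Cluster`. The sketch below only assembles those command lines for review; it does not run them. The cluster name and IP address are hypothetical, since they are not given in this post, and the helper checks that the cluster IP falls inside the LAN subnet used above.

```python
import ipaddress

NODES = ["WMCLU01", "WMCLU02"]
LAN_NETWORK = "10.0.0.0/255.255.0.0"   # LAN subnet used by the nodes
# Hypothetical values: choose your own name and a free LAN address.
CLUSTER_NAME = "WMCLUSTER"
CLUSTER_IP = "10.0.0.20"


def cluster_setup_commands(nodes, name, ip, lan_network):
    """Return PowerShell commands that mirror the wizard steps.

    Raises ValueError if the cluster IP is not in the LAN subnet.
    """
    if ipaddress.ip_address(ip) not in ipaddress.ip_network(lan_network):
        raise ValueError(f"{ip} is not in {lan_network}")
    node_list = ",".join(nodes)
    commands = [
        # Failover Clustering feature (plus management tools) on every node.
        f"Install-WindowsFeature -Name Failover-Clustering "
        f"-IncludeManagementTools -ComputerName {node}"
        for node in nodes
    ]
    commands.append(f"Test-Cluster -Node {node_list}")
    commands.append(
        f"New-Cluster -Name {name} -Node {node_list} -StaticAddress {ip}"
    )
    return commands


for command in cluster_setup_commands(NODES, CLUSTER_NAME, CLUSTER_IP, LAN_NETWORK):
    print(command)
```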

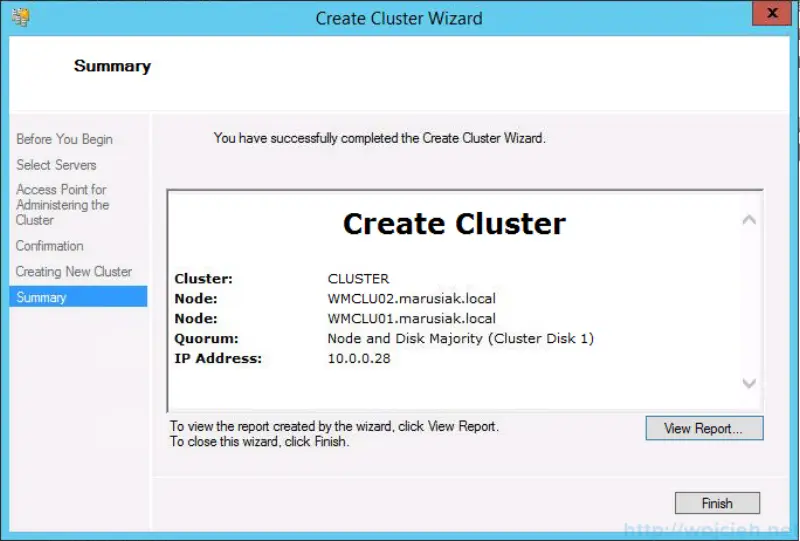

As you can see in the screenshot, the cluster was created successfully.

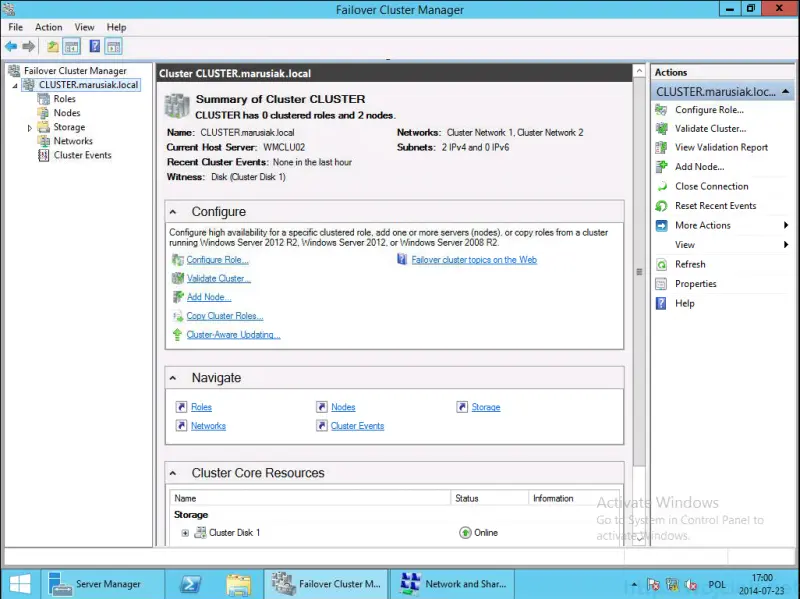

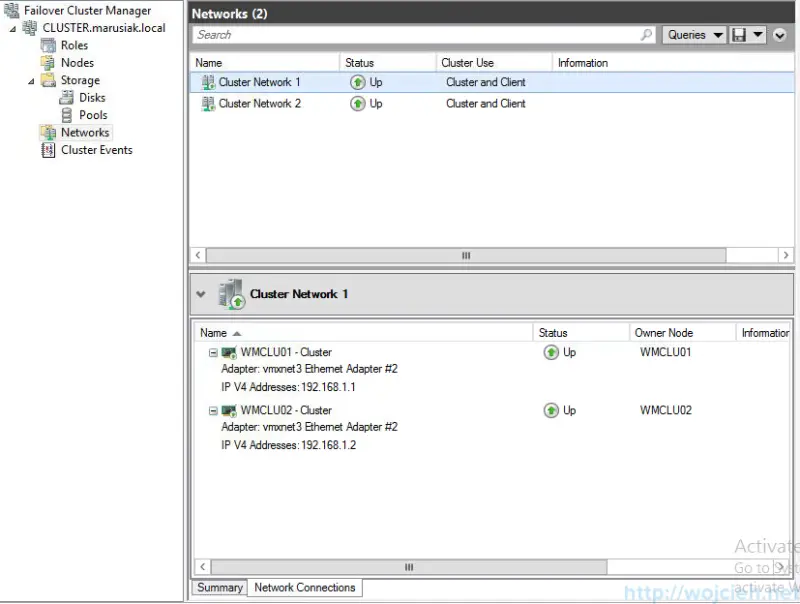

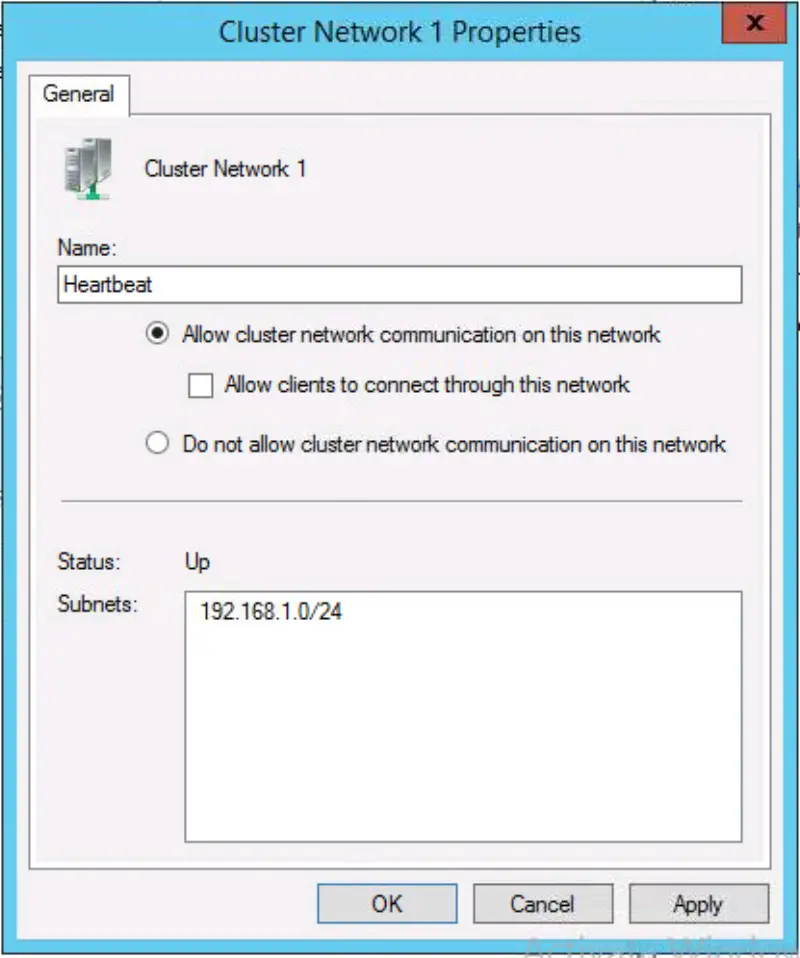

The last thing to do is to adjust the networks in the cluster, so that the cluster (heartbeat) network is not used for client communication. Go to Networks, select Cluster Network 1 and expand Network Connections.

On the network that will be used for the cluster heartbeat, open Properties and deselect Allow clients to connect through this network. You can also rename the network; this is not mandatory but might be useful when troubleshooting.

The second network should have the Allow clients to connect through this network checkbox selected.
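Behind these checkboxes, each cluster network has a `Role` property that can also be set with `Get-ClusterNetwork`: 0 means no cluster communication, 1 means cluster communication only (heartbeat), and 3 means cluster and client communication. The sketch below maps the two checkboxes to that value and builds the matching PowerShell line; it assumes "Cluster Network 1" is your heartbeat network, which may differ in your environment.

```python
from enum import IntEnum


class ClusterNetworkRole(IntEnum):
    """Values of the cluster network Role property."""
    NONE = 0                 # do not allow cluster network communication
    CLUSTER_ONLY = 1         # cluster communication only (heartbeat)
    CLUSTER_AND_CLIENT = 3   # cluster and client communication


def role_from_checkboxes(allow_cluster: bool, allow_clients: bool) -> ClusterNetworkRole:
    """Translate the network Properties checkboxes into a Role value."""
    if not allow_cluster:
        if allow_clients:
            raise ValueError("clients require cluster communication to be allowed")
        return ClusterNetworkRole.NONE
    if allow_clients:
        return ClusterNetworkRole.CLUSTER_AND_CLIENT
    return ClusterNetworkRole.CLUSTER_ONLY


def set_role_command(network: str, role: ClusterNetworkRole) -> str:
    """Build the PowerShell line that sets the Role of a cluster network."""
    return f'(Get-ClusterNetwork "{network}").Role = {int(role)}'


# Assumption: "Cluster Network 1" is the heartbeat network.
heartbeat = role_from_checkboxes(allow_cluster=True, allow_clients=False)
print(set_role_command("Cluster Network 1", heartbeat))
# (Get-ClusterNetwork "Cluster Network 1").Role = 1
```

The LAN network, with both checkboxes selected, maps to Role 3.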

Final thoughts and useful links

I didn’t configure any roles because this is beyond my knowledge. There are plenty of other sources with guides on how to add roles to the cluster.

Here you can find useful links from VMware and Microsoft about clustering with Microsoft technologies.

VMware: Microsoft Clustering on VMware vSphere: Guidelines for supported configurations

VMware: Setup for Failover Clustering and Microsoft Cluster Service